We recently shared the performance results from our collaboration with Ampere. Our blog post covers how Ampere’s Altra® Max Platform performed when using LINBIT SDS® to manage and replicate ultra-high-speed Samsung PM1733a SSDs. For the full details, see our Tech Guide: Benchmarking On Ampere Altra Max Platform With LINBIT SDS.

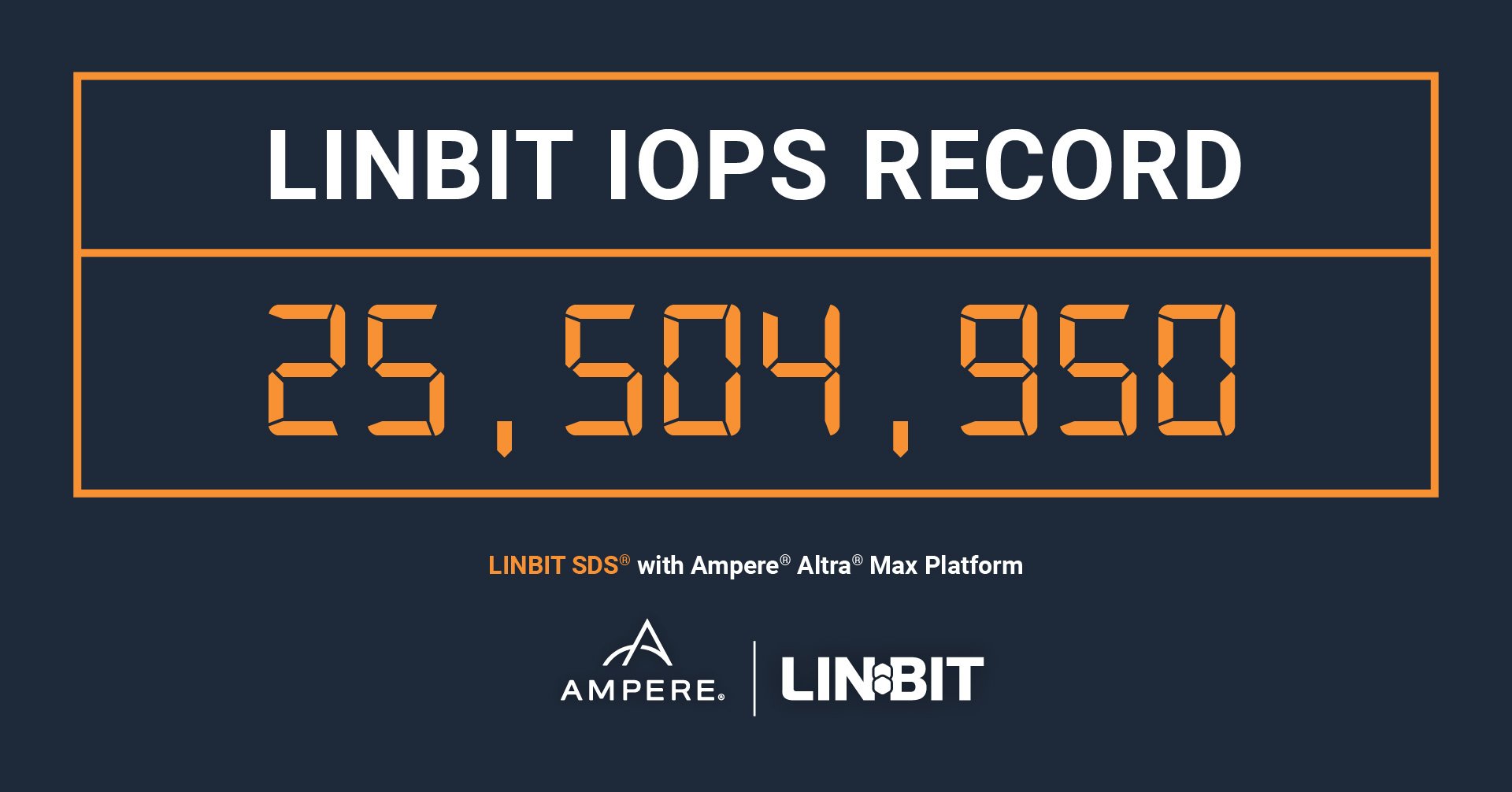

Technical details and precise numbers aside, the bottom line is that LINBIT SDS has once again contributed to a new IOPS record. In 2020, LINBIT® measured 14.8 million IOPS on a 12-node cluster, which set the record for the highest storage performance reached by a hyper-converged system on the market. Now, LINBIT SDS has helped Ampere’s Altra Max Platform record a staggering 25.5 million IOPS on a cluster of just three servers.
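To put the two headline figures side by side, here is a minimal sketch that divides each cluster's total IOPS by its node count. Only the totals and node counts come from the results above; the function and variable names are illustrative. The two runs used different hardware, so treat the per-node comparison as a rough indication rather than a like-for-like benchmark.

```python
def iops_per_node(total_iops: float, nodes: int) -> float:
    """Return the average IOPS delivered per node in a cluster."""
    if nodes <= 0:
        raise ValueError("nodes must be a positive integer")
    return total_iops / nodes


# Figures quoted in this post: (total IOPS, number of nodes).
RESULTS = {
    "2020 record (12 nodes)": (14.8e6, 12),
    "Ampere Altra Max (3 nodes)": (25.5e6, 3),
}

# Average per-node throughput for each benchmark run.
per_node = {
    name: iops_per_node(total, nodes)
    for name, (total, nodes) in RESULTS.items()
}

# How many times more IOPS each node delivered in the newer run.
ratio = per_node["Ampere Altra Max (3 nodes)"] / per_node["2020 record (12 nodes)"]

for name, value in per_node.items():
    print(f"{name}: {value:,.0f} IOPS per node")
print(f"Per-node improvement: {ratio:.1f}x")
```

On these numbers, the 2020 cluster averaged roughly 1.2 million IOPS per node and the three-server cluster 8.5 million, about 6.9 times more per node with a quarter of the servers.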

The results are impressive, and technically minded readers will enjoy diving into the data. But what does our achievement mean in simple terms, and why does it matter to other businesses that use our software?

Reducing TCO (Total Cost of Ownership) with LINBIT

The more performance you can get while reducing hardware costs and quantities, the better for your business. We can help you reduce TCO in terms of both hardware and environmental costs. Here are the direct benefits of doing so:

- Cost savings. Lowering hardware and environmental costs results in significant savings over the life of an IT system. By reducing the costs associated with acquiring, deploying, and maintaining hardware, organizations can save money for other important initiatives.

- Increased efficiency. By implementing more energy-efficient hardware and reducing the power required to operate an IT system, organizations can reduce operational costs and free up resources for other vital tasks.

- A positive impact on the environment. By reducing the amount of power and resources required to operate an IT system, organizations can reduce their carbon footprint and contribute to a more sustainable future.

- Improved performance. By investing in more efficient hardware and reducing the need for maintenance, organizations can ensure that their systems run more smoothly and reliably.

- A competitive advantage. By reducing costs and improving efficiency, organizations can focus on delivering better products and services to their customers, which can help them stay ahead of their competitors in the marketplace.

In Conclusion

Lowering TCO can bring several benefits, including cost savings, increased efficiency, improved environmental sustainability, better performance, and a competitive advantage. In addition, by investing in more efficient hardware and adopting sustainable practices, organizations can improve their bottom line while contributing to a more sustainable future. Check out our storage TCO calculator to compare LINBIT against the industry standard.

Having achieved record-breaking performance on two separate occasions, LINBIT’s software has proven effective at delivering the performance that helps businesses lower TCO. Contact us today to discuss anything in this article. We’re always online and always here to help.