In this video, Matt Kereczman from LINBIT® combines components of LINBIT SDS and LINBIT DR to demonstrate extending an existing LINSTOR®-managed DRBD® volume to a disaster recovery (DR) node in a geographically separated datacenter, using LINSTOR and DRBD Proxy.

Watch the video:

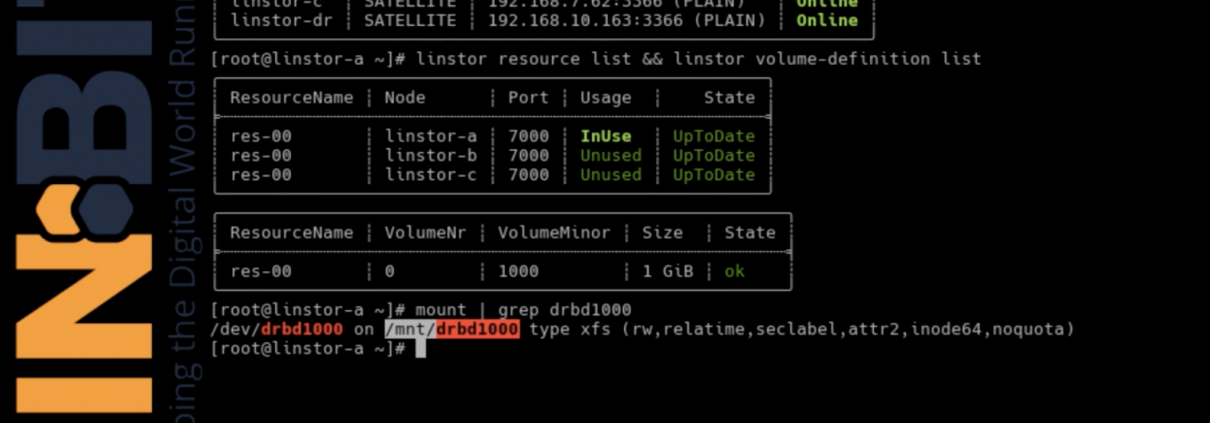

He’s already created a LINSTOR cluster on four nodes: linstor-a, linstor-b, linstor-c and linstor-dr.

You can see that linstor-dr is in a different network than the other three nodes. This network exists in the DR datacenter, which is connected to the local datacenter via a 40Mb/s WAN link.

He has a single DRBD resource defined, which is currently replicated synchronously between the three peers in the local datacenter. He lists his LINSTOR-managed resources and volumes; the volume is currently mounted on linstor-a.

Before he adds a replica of this volume to the DR node in his DR datacenter, he’ll quickly test the write throughput of his DRBD device, so he has a baseline of how well it should perform.

He uses dd to run the test. It shows 85-95MB/s of throughput from his LINSTOR-managed DRBD volume before adding his DR replica. This is as fast as the storage can go in these test systems, so he’ll note that as his baseline.
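The post does not show the exact dd invocation, so the following is only a rough Python analogue of a sequential write test, not the command Matt ran. The function name, block size, block count, and the temporary target file are all illustrative assumptions; to measure a real volume you would point it at a file on the DRBD-backed mount.

```python
import os
import tempfile
import time


def measure_write_throughput(path, block_size=1024 * 1024, count=16):
    """Write `count` blocks of `block_size` bytes to `path`, fsync, and return MB/s."""
    block = b"\0" * block_size
    start = time.perf_counter()
    with open(path, "wb") as f:
        for _ in range(count):
            f.write(block)
        f.flush()
        # Force the data down to the device before stopping the clock,
        # otherwise we would mostly be timing the page cache.
        os.fsync(f.fileno())
    elapsed = time.perf_counter() - start
    return (block_size * count) / 1e6 / elapsed


if __name__ == "__main__":
    # Hypothetical target: a temporary file. Replace it with a path on the
    # mounted DRBD device to test the replicated volume.
    with tempfile.TemporaryDirectory() as tmp:
        target = os.path.join(tmp, "testfile")
        print(f"{measure_write_throughput(target):.1f} MB/s")
```

Running the same measurement before and after each change gives comparable numbers, which is the point of taking a baseline first.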

LINBIT DR speeds up the data replication

To recap, DRBD can only perform as well as the slowest component among the replication networks and storage subsystems of all its peers. Adding a DR node whose replication network spans a 40Mb/s WAN link should therefore bottleneck your writes to 40Mb/s, even when using DRBD’s asynchronous protocol A. DRBD Proxy mitigates this bottleneck by acting as a buffer for your replication traffic. Matt will first demonstrate the bottleneck by showing what happens with just DRBD stretched across long distances, and then he’ll enable DRBD Proxy to observe the difference in performance.
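The "slowest component" rule can be sketched as taking a minimum over the components in the write path. This is a simplified model, not a description of DRBD internals. The function names are illustrative, and the 90 MB/s local storage figure is an assumption: the midpoint of the 85-95 baseline, read as megabytes per second.

```python
def mbit_to_mbyte(mbit_per_s):
    """Convert megabits per second to megabytes per second (8 bits per byte)."""
    return mbit_per_s / 8


def effective_write_rate(rates_mb_s):
    """Return (name, rate) of the slowest component, which caps write throughput."""
    name = min(rates_mb_s, key=rates_mb_s.get)
    return name, rates_mb_s[name]


rates = {
    "local storage": 90.0,          # assumption: midpoint of the measured baseline
    "WAN link": mbit_to_mbyte(40),  # the 40Mb/s link to the DR datacenter
}

print(effective_write_rate(rates))  # ('WAN link', 5.0)
```

Under this model the WAN link, at 5 MB/s, is what an unbuffered replica in the DR datacenter would hold writes to.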

First, he’ll extend his resource to the DR node using LINSTOR, and then set DRBD’s replication mode to asynchronous for each of the connections in the connection mesh.

The first command extends the DRBD device to the DR node. The second sets the protocol to asynchronous from each of the local nodes to the DR node.
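The LINSTOR commands themselves appear in the video and are not reproduced here. As a small illustration of which connections are affected, the sketch below enumerates the connection mesh for the four nodes named earlier and picks out the connections that involve the DR node. The variable names are illustrative.

```python
from itertools import combinations

nodes = ["linstor-a", "linstor-b", "linstor-c", "linstor-dr"]
dr_node = "linstor-dr"

# A full mesh has one connection per unordered pair of nodes.
all_connections = list(combinations(nodes, 2))

# Connections that cross the WAN link are the ones switched to asynchronous.
dr_connections = [pair for pair in all_connections if dr_node in pair]

# Connections inside the local datacenter keep replicating synchronously.
local_connections = [pair for pair in all_connections if dr_node not in pair]

print(len(all_connections))  # 6
for a, b in dr_connections:
    print(f"{a} <-> {b}: asynchronous")
```

Of the six connections in the mesh, three cross the WAN link, one from each local node to linstor-dr.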

Until he enables DRBD Proxy, performance should now be limited by the addition of the DR node.

He proves this by running the test again.

Now he’s getting about 5MB/s on the DRBD device, which matches the throughput of his 40Mb/s WAN link (40 megabits per second is 5 megabytes per second). He’ll now use LINSTOR to enable DRBD Proxy for each connection in his mesh and adjust the buffer size to 100MB. Then, when he reruns the test, he should see that he is no longer being bottlenecked by the WAN link’s throughput.

He raises the buffer size from the default of 32MB to 100MB, a good starting point for the proxy buffer’s memory limit, and then reruns the test.
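To get a feel for what the buffer size means, here is a deliberately simplified model of a buffer that fills at the local write rate and drains at the WAN rate. It ignores compression, protocol overhead, and everything else DRBD Proxy actually does. The function name is illustrative, and the 90 MB/s write rate is an assumption taken from the middle of the baseline range, read as megabytes per second.

```python
def buffer_absorb(buffer_mb, write_rate_mb_s, drain_rate_mb_s):
    """Return (seconds until the buffer fills, MB written at full speed by then)."""
    if write_rate_mb_s <= drain_rate_mb_s:
        # The link keeps up with the writer, so the buffer never fills.
        return float("inf"), float("inf")
    seconds = buffer_mb / (write_rate_mb_s - drain_rate_mb_s)
    return seconds, write_rate_mb_s * seconds


for size in (32, 100):
    seconds, burst = buffer_absorb(size, write_rate_mb_s=90.0, drain_rate_mb_s=5.0)
    print(f"{size}MB buffer: full after {seconds:.2f}s, {burst:.1f}MB written at full speed")
```

In this model a larger buffer absorbs a proportionally larger write burst at local speed before the WAN link becomes the limit again.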

No bottleneck with LINBIT DR

There he sees the bottleneck has in fact been removed! Also, don’t worry if the commands he ran seemed a bit daunting: the LINSTOR drivers for your cloud platform should handle most of that heavy lifting for you, and dropping to the LINSTOR command-line utility should only be required for more advanced tuning of resources.

To quickly recap, LINSTOR enabled him to extend his DRBD replicated volume across his WAN link. This adds disaster recovery capabilities to the already highly available block storage, and most importantly, it does so without impacting performance. Check out LINBIT’s other videos about installing LINSTOR with Kubernetes, OpenNebula, OpenStack, or as a stand-alone Software-defined Storage (SDS) platform for your environment. If you have questions about anything you’ve seen here, or about how LINSTOR would work in your project, head on over to LINBIT.com and reach out!

That’s all for now. Thank you for watching and following along, and don’t forget to like and subscribe!