NuoDB and LINBIT® put our technologies together to see just how well they performed, and we are both happy with the results. We decided to compare LINSTOR® provisioned volumes against Kubernetes Hostpath (Direct Attached Storage) in a Kubernetes cluster hosted in Google Cloud Platform (GCP) to show that our on-prem testing results can also be reproduced in a popular cloud-computing environment.

Background:

NuoDB is a container-native, distributed OLTP database that is ANSI SQL compliant and supports ACID transactions, providing responsive scalability and continuous availability. This makes it a great choice for your distributed applications running in cloud provider-managed and open source Kubernetes environments, such as GKE, EKS, AKS and Red Hat OpenShift. As you scale out NuoDB Transaction Engines in your cluster, you scale out the database’s capacity to process dramatically more SQL transactions per second and, at the same time, build in process redundancy to ensure the database, and your applications, are always on.

As you scale your database, you also need to scale the storage it uses to persist its data. This is usually where things get sluggish. Highly scalable storage isn’t always highly performant, and most of the time the opposite seems to be true. Highly scalable, highly performant storage is the niche that LINSTOR® aims to fill.

LINSTOR, LINBIT’s SDS software, can be used to deploy DRBD® devices in large scale storage clusters. DRBD devices are expected to be about as fast as the backing disk they were carved from, or as fast as the network device DRBD is replicating over (if DRBD’s replication is enabled). At LINBIT we usually aim for a performance impact of less than 5% when using DRBD replication in synchronous mode.
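That rule of thumb can be written down as a tiny model. The sketch below is only an illustration under simplifying assumptions: it treats throughput as the minimum of the backing disk and the replication network, and applies the 5% figure as a flat overhead budget. The function name and all numbers are hypothetical, not LINBIT tooling or measured values.

```python
def expected_drbd_throughput(disk_mbps, network_mbps=None, overhead=0.05):
    """Rough upper bound on DRBD device throughput in MB/s.

    disk_mbps    -- throughput of the backing disk
    network_mbps -- throughput of the replication link, or None when
                    replication is disabled
    overhead     -- fraction lost to synchronous replication (assumed 5%)
    """
    if not 0 <= overhead < 1:
        raise ValueError("overhead must be in the range [0, 1)")
    if network_mbps is None:
        # No replication: the device is about as fast as its backing disk.
        return disk_mbps
    # With replication, the slower of disk and network is the bottleneck.
    bottleneck = min(disk_mbps, network_mbps)
    return bottleneck * (1 - overhead)


# Hypothetical numbers, chosen only to show the three cases.
print(expected_drbd_throughput(200.0))          # replication disabled
print(expected_drbd_throughput(200.0, 1250.0))  # disk is the bottleneck
print(expected_drbd_throughput(200.0, 100.0))   # network is the bottleneck
```

In practice the overhead depends on the workload and the replication protocol, so treat the output as a sanity check rather than a prediction.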

The LINSTOR CSI (container storage interface) driver for Kubernetes allows you to dynamically provision LINSTOR provisioned block devices as persistent volumes for your container workloads… you see where I’m going… 🙂
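To make dynamic provisioning a little more concrete, here is a minimal sketch that builds a StorageClass and a matching PersistentVolumeClaim as plain Python dictionaries and prints them as JSON, which `kubectl apply -f` accepts. The provisioner name `linstor.csi.linbit.com` is the one used by the LINSTOR CSI driver; the parameter keys `autoPlace` and `storagePool`, the pool name, the replica count, and the volume size are assumptions for illustration and are not the settings used in this test. Parameter keys have changed between driver releases, so check the LINSTOR user's guide for the version you run.

```python
import json


def linstor_storage_class(name, storage_pool, replicas):
    """Build a StorageClass manifest for the LINSTOR CSI driver."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": "linstor.csi.linbit.com",
        "parameters": {
            # Assumed parameter keys; StorageClass parameters must be strings.
            "autoPlace": str(replicas),
            "storagePool": storage_pool,
        },
    }


def volume_claim(name, storage_class, size):
    """Build a PersistentVolumeClaim that requests a volume from a class."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


# Hypothetical names and sizes, for illustration only.
sc = linstor_storage_class("linstor-example", "example-pool", replicas=2)
pvc = volume_claim("example-claim", "linstor-example", "10Gi")

# Wrap both objects in a List so they can be applied in one step.
manifest = {"apiVersion": "v1", "kind": "List", "items": [sc, pvc]}
print(json.dumps(manifest, indent=2))
```

Any pod that mounts `example-claim` would then get a LINSTOR-provisioned block device without an administrator creating the volume by hand.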

Testing:

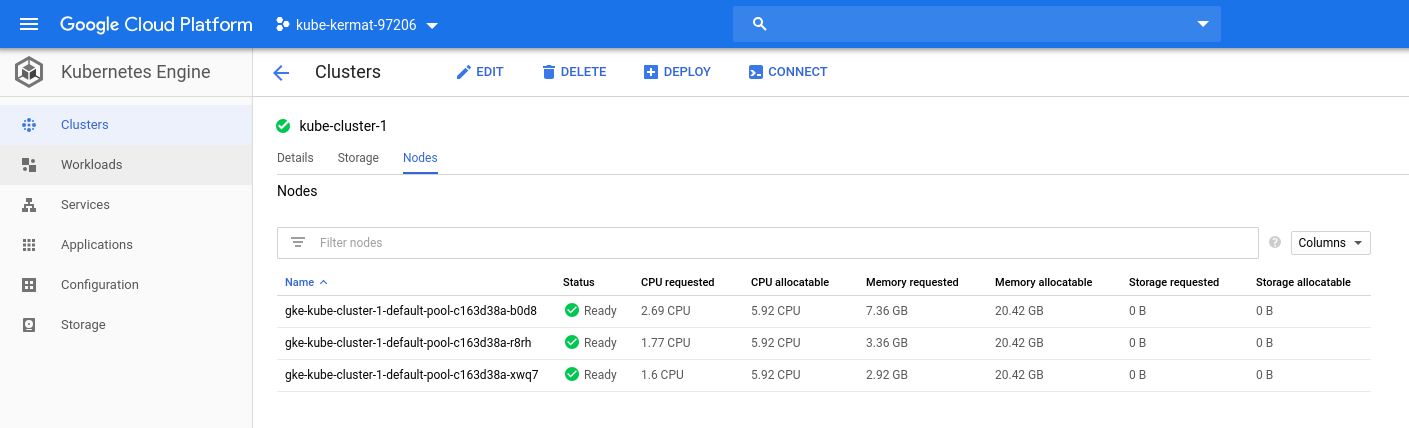

I spun up a 3-node GKE (Google Kubernetes Engine) cluster in GCP, and customized the standard node type to give each node 6 vCPUs and 22 GB of memory:

When using GKE to spin up a Kubernetes cluster, you’re provided with a “standard” storage class by default. This “standard” storage class dynamically provisions and attaches GCE standard disks to your containers that need persistent volumes. Those GCE standard disks are the pseudo “hostpath” device we wanted to compare against, so we deployed NuoDB into the cluster, and ran a YCSB (Yahoo Cloud Serving Benchmark) SQL workload against it to generate our baseline:

Using the NuoDB Insights visual monitoring tool (which ships with NuoDB), we can see in the chart above that we had 3 TE (Transaction Engine) pods feeding into 1 SM (Storage Manager) pod. We can also see that our Aggregate Transaction Rate (TPS) is hovering just over 15K transactions per second. As a side note, this deployment created five GCE standard disks in my Google Compute Engine account.

LINSTOR provisions its storage from an established LINSTOR cluster, so for our LINSTOR comparison, I had to stand up Kubernetes on GCE nodes “the hard way” so I could also stand up a LINSTOR cluster on the nodes (see LINBIT’s user’s guide or LINSTOR quickstart for more on those steps). I created four nodes as VM instances in GCE. Three nodes were set up to mimic the GKE cluster, each with 6 vCPUs and 22 GB of memory. The fourth, our master node, had 2 vCPUs and 16 GB of memory and carried the Kubernetes master node taint so that no pods would be scheduled on it. Google recommended I scale these nodes back to save money, so I did that, resulting in the following VM instances:

After setting up the LINSTOR and Kubernetes clusters on the GCE VM instances, I attached a single “standard” GCE disk to each node for LINSTOR to provision persistent volumes from, and deployed the same NuoDB distributed database stack and YCSB workload into the cluster:

After letting the benchmark run for some time, I could see that the transaction rate was hovering just under 15K TPS, which is within the expected 5% of our ~15K baseline!
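The comparison itself is simple arithmetic: take the drop from the baseline as a fraction of the baseline and check it against the 5% budget. A minimal sketch follows. The function name is hypothetical, and the two TPS values are placeholders standing in for "just over 15K" and "just under 15K"; they are not the exact figures from this test.

```python
def within_tolerance(baseline_tps, measured_tps, tolerance=0.05):
    """Return (ok, drop), where drop is the fractional loss versus baseline.

    A negative drop means the measured rate beat the baseline.
    """
    if baseline_tps <= 0:
        raise ValueError("baseline_tps must be positive")
    drop = (baseline_tps - measured_tps) / baseline_tps
    return drop <= tolerance, drop


# Placeholder values for illustration, not the measured results.
baseline = 15200   # stands in for "just over 15K" on GCE standard disks
measured = 14800   # stands in for "just under 15K" on LINSTOR volumes

ok, drop = within_tolerance(baseline, measured)
print(f"drop: {drop:.1%}, within 5% budget: {ok}")
```

Plugging in the real averages from NuoDB Insights over the same time window gives the actual overhead for a given run.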

Conclusion:

You might be thinking, “That’s good and all, but why not just use GKE with the GCE-backed ‘standard’ storage class?” The answer is features. Using LINSTOR to provide storage to your container platform enables you to:

- Add replicas of volumes for resiliency at the storage layer – including remote replicas

- Use replicas of your volumes in DRBD’s read balancing policies, which could increase your read speeds beyond what’s possible from a single volume

- Provide granular control of snapshots at either the Kubernetes or LINSTOR level

- Provide the ability to clone volumes from snapshots

- Enable transparently encrypted volumes

- Provide data-locality or accessibility policies

- Lower managerial overhead in terms of the number of physical disks (comparing one GCE disk for each PV with GKE vs. one GCE disk for each storage node with LINSTOR).

Ultimately, the combination of NuoDB and LINSTOR enables clients to run high-performance persistent databases in the cloud or on premises with ease of scale and “always-on” resiliency. So far, after testing both proprietary and open-source software, NuoDB has found that LINSTOR’s open-source SDS is a production-ready, high-performance, and highly reliable storage solution to provision persistent volumes.